How well does Outlook 2016 run compared to Outlook 2011?

The Big Question

If you are a Microsoft Office user on the Mac, you’ve heard the news about the Office 2016 for Mac preview. For people like you, it’s big news — the first major upgrade to Office for Mac in five years. While applications such as Word, Excel and PowerPoint are in “Preview” status, Outlook 2016 for Mac has been shipping since October, 2014.

The new Outlook is a complete re-architecting of the internals of Outlook: the database. As you may know, Entourage (in Office for Mac 2008 and prior) had very large databases, and had to duplicate data to support Apple’s Spotlight. Outlook for Mac 2011 was the first version of Outlook on the Mac (at least in a very long time). Outlook 2016 for Mac, however, was designed for performance from the ground up.

As you may remember, MacTech did extensive benchmarking on previous versions of Microsoft Office (2004 on Intel Macs, and 2008, and 2011). The big question, therefore, is just “How much faster is Outlook 2016 when compared to Outlook 2011?”

To answer that question, we put Outlook 2016 (version 15.8) through its paces on Yosemite 10.10.2, on a variety of Mac models to give you a good idea of how things perform based on the type of machine you are using. With about 3,000 tests, we looked at a variety of ways that Outlook performs.

[nextpage title=”Overview”]

We won’t keep you in suspense. In general, Outlook 2016 is significantly and noticeably faster than Outlook 2011 in a variety of areas.

There were some surprises, however, in areas that felt faster, but weren’t when clocked with a stopwatch, providing an interesting lesson in user speed perception vs. reality.

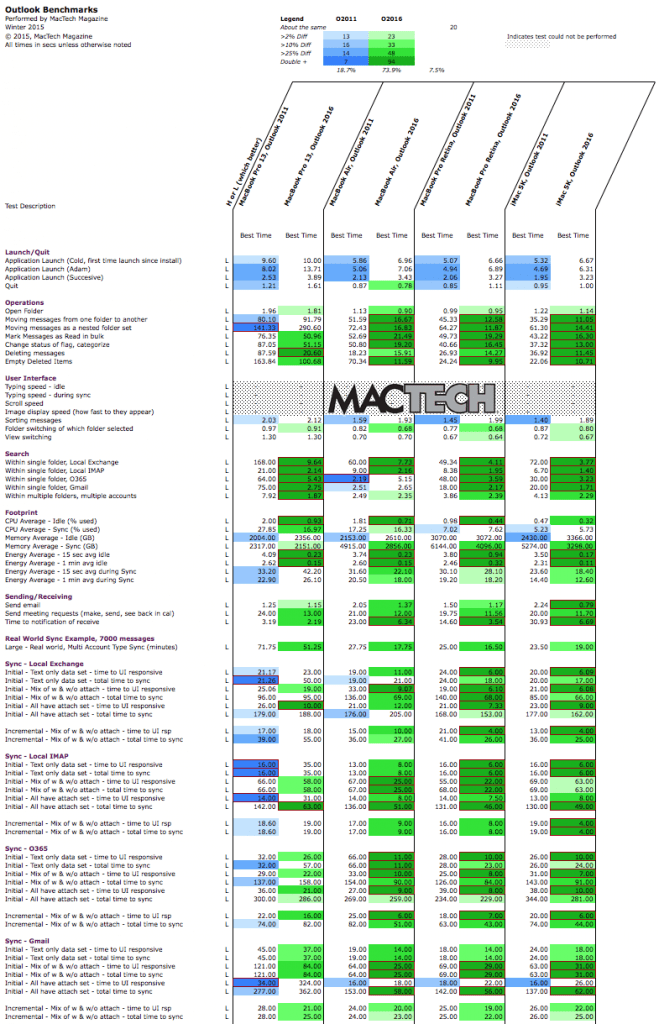

Before we get into details, let’s take a look at an overview of the results. With thousands of tests, we find it most representative to see it visually with MacTech’s trademark colored worksheets. The worksheet below color codes which of the two versions is faster on each of the models tested. The darker the cell, the more it won by.

Figure 1: Outlook 2011 vs. Outlook 2016

(Colored cells mean fastest of grouping. Darker colors indicate even faster.)

As you can see, 2016 is faster almost across the board. There are places that it’s not as quick, but they are relatively few and far between.

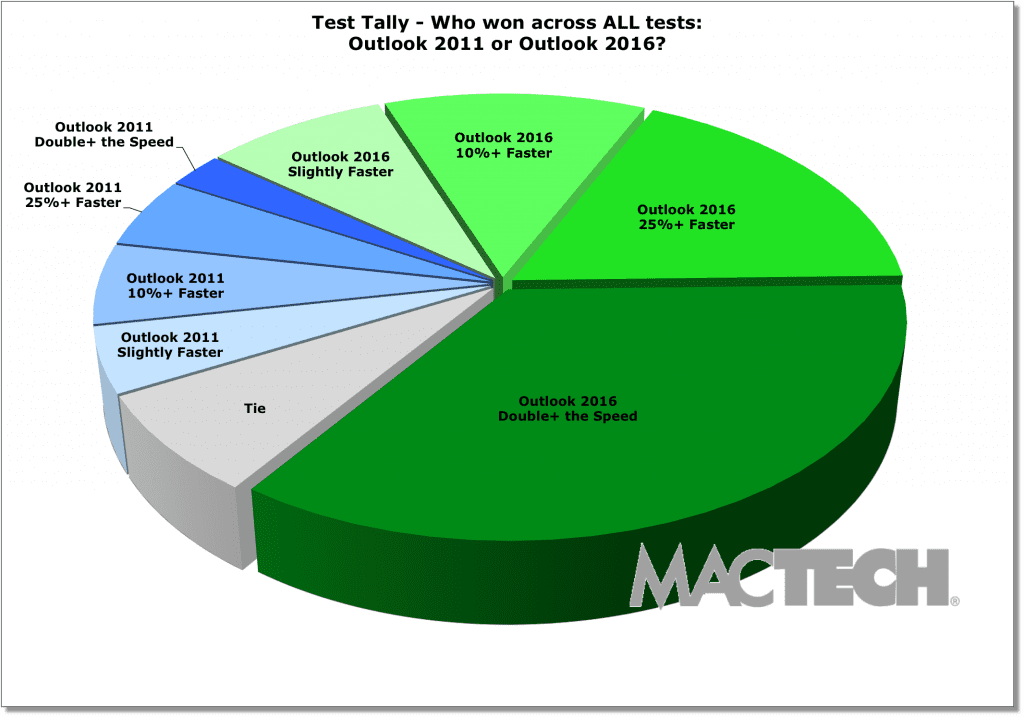

A different way to look at it is by how many times one application was faster than another and by how much. Colors match the spreadsheet, but this gives you an idea of the counts of tests. Sync tests, arguably the most important performance feature for an email package, make up about half the count.

Figure 2: Outlook 2011 vs. Outlook 2016

Test Tally: Which application won more tests

(Colored cells mean fastest of grouping. Darker colors indicate even faster.)

[nextpage title=”Top-Level Results”]

Based on our extensive testing and benchmarking, Outlook 2016 is noticeably and significantly faster than Outlook 2011. If you are using Office for Mac, you should seriously consider moving to Outlook 2016. Assuming your mail is hosted on a server (i.e., not POP accounts), there’s no reason not to at least try it out and see what you think yourself.

We’re going to dive into detail on each set of tests below, but let’s give you an overview of what Outlook 2016 looks like when compared to Outlook 2011.

- Outlook 2016 used fewer resources at all times, often as much as 20-40% less CPU and memory compared to Outlook 2011.

- When idle, Outlook 2016 consumes next to no energy, extending the battery life for all types of Outlook users.

- Outlook 2011 was a bit faster than Outlook 2016 in time to launch and quit, but Outlook 2016 did well enough. It’s a worthwhile tradeoff for getting the new application architecture version, and benefits now as well as those to come.

- Outlook 2016 is fast in everyday operational tasks from opening folders, moving messages, marking messages as read, deleting messages, and even changing the status of flags or categorizing. How much faster? Often double or more when compared to Outlook 2011.

- Outlook 2016’s new modern database architecture (SQLite) gives not only the ability for incredibly powerful, multi-faceted searches, but searches that are 2x-15x faster!

- In looking very deeply at sync times, which many would consider the most important performance aspect of a mail client, Outlook 2016 was not just faster; it got the job done in one-third less time than Outlook 2011 in our “real world testing.” This suite included a large, multi-account sync that covered local Exchange and IMAP accounts (on-premise server) as well as Office 365 (hosted Exchange) and Gmail.

- In the sync section below, you can see many more granular tests across each of these types of accounts, but as a preview:

- Sync with attachments was much faster given Outlook 2016’s new “three pass” approach of getting headers, then message body, then attachments.

- Local Exchange accounts synced faster, ranging from somewhat faster to as much as double the speed.

- All Local IMAP tests were faster, but of particular interest was that messages with attachments synced 2x-2.5x faster.

- Office 365 accounts synced faster across the board, from somewhat faster to 2.5x faster.

- Finally, Outlook 2016 was 2x or more faster than Outlook 2011 when using Gmail.

While beyond the scope of this benchmark, we also wanted to look at the strain that Outlook 2016 put on servers. It was clear that Outlook 2016 not only plays by the rules, but is also quite kind to servers. Given the speed improvements above, the servers seem to respond in kind.

Outlook 2016 has completely rewritten internals, and the performance is clearly there. With something on the order of one update per month, Microsoft has already demonstrated a commitment to quickly responding to users with new features and bug fixes. It’s a pace that one expects from a small developer, and impressive from a company the size of Microsoft. And, we expect to see the integration of Outlook get better and better as new features are added to the all-new underlying engine.

[nextpage title=”Test Environment”]

When we were choosing computer models, we set out to choose not the fastest models, but ones that would be a good representation of what most people may have—common machines with a variety of drive types and processing power. For anyone that may be purchasing machines, this will give you an idea of how you can maximize performance for your users.

Specifically, these are the machines that we used, with basic specs, pricing and CPU Mark to give you a better idea of each model and its inherent performance.

- 13″ MacBook Pro, entry level w/5400 rpm HD, 4GB RAM, 2.5GHz i5 processor

This machine retails for $1100, and scores a 3799 CPU Mark.

- 13″ MacBook Air, performance model, SSD, 8GB RAM, 1.7 GHz i7

This machine retails for $1450, and scores a 4178 CPU Mark.

- 15″ MacBook Pro, integrated graphics model, SSD, 16GB RAM, 2.5 GHz i7

This machine retails for $2100, and scores a 9433 CPU Mark.

- iMac 27″ 5K, performance model, Fusion Drive, 8GB RAM, 4.0 GHz Core i7

This machine retails for $2750, and scores an 11289 CPU Mark.

The test bench included configurations of OS X Yosemite 10.10.2, with Office for Mac 2011, and Outlook 2016 for Mac version 15.8 installed, each with the most up-to-date patches. All installations were completely clean installations of both OS X and Microsoft Office.

[nextpage title=”Specific Testing”]

We ran thousands of tests. Those tests were organized into several groups (and even sub-groups) including:

- Launch/Quit

- Operations

- Searching

- CPU and Memory Footprint

- Energy Footprint

- User Interface Performance

- Sending, Receiving and Notification Performance

- Sync Performance

Sync is one of the most important performance factors in an email client. With that in mind, we looked deeply at sync for four different types of email accounts:

- Local Exchange (on premise Exchange server)

- Local IMAP (on premise Exchange server with IMAP account)

- Office 365 (hosted Exchange)

- Gmail (accessed via IMAP)

Finally, we looked briefly at the mail client’s impact on servers. Let’s dive into each of these more deeply now.

[nextpage title=”App Launch and Quit”]

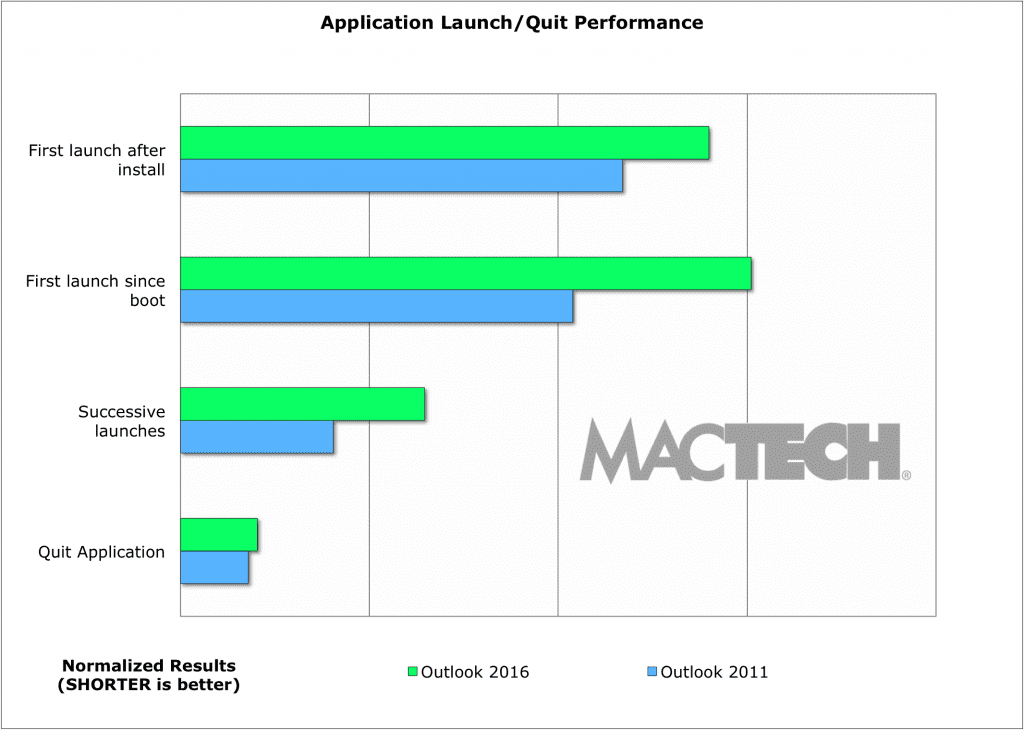

We wanted to see how Outlook did with the very first launch (unconfigured, post-install initial launch). More importantly, we wanted to time the first launch after an OS boot, which clears caches, and then successive launches. In all cases, Outlook 2011 was faster. Quitting the application was close, but 2011 still edged out 2016.

It’s common for applications to do things that give the user the perception of faster launching. As such, MacTech tests end when you can actually start doing something in the application. That way, we’re measuring both the reality and the user perception.

As we said, one of the biggest differences in Outlook 2016 is that it has a new architecture. This isn’t just about the underlying database; there are other major internal changes. These not only help meet Apple’s requirements for sandboxing, but more importantly, Outlook 2016 has adopted security and other architecture that’s consistent with the rest of the Office for Mac suite. The new Outlook adheres to these and other goals that Microsoft had, but the downside is that it does not launch as quickly as Outlook 2011. It’s not bad, mind you, but it’s not quite as fast at launching and quitting. For most people, this won’t matter, as people typically launch an email application and leave it open. The first benefit, which you can already see, is improved Information Rights Management (IRM) support, but we expect to see more benefits coming from this new architecture in the future.

Figure 3: Launching Speeds

(shorter is faster)

[nextpage title=”Operations”]

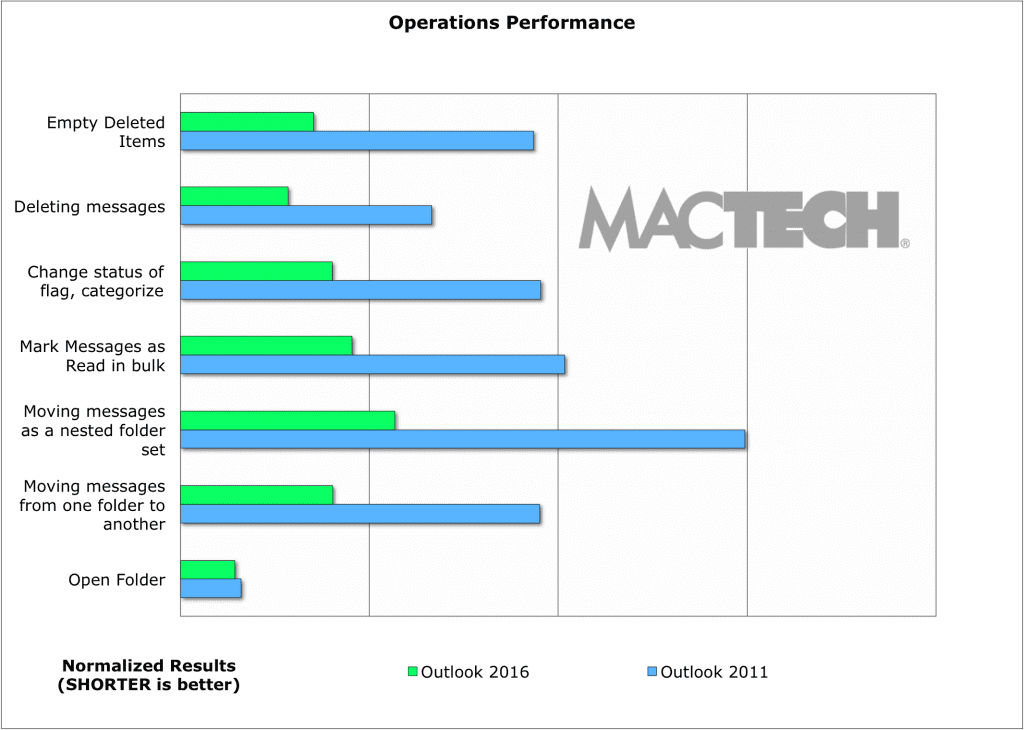

Outside of launching and syncing, there are some operations that are very common for users. To represent these, we tested the following:

- Opening folders

- Moving messages from one folder to another

- Moving messages to a nested folder set

- Marking, in bulk, messages as “read”

- Changing the status of flags or categorizing, in bulk

- Deleting messages

- Emptying deleted items

It’s not surprising, given the new “engine” under the hood in Outlook 2016, but across the board, Outlook 2016 was much faster in almost every item above. Even in the case of the already fast “open folder,” it was still faster there as well.

Figure 4: Operations Performance

(shorter is faster)

[nextpage title=”Searching”]

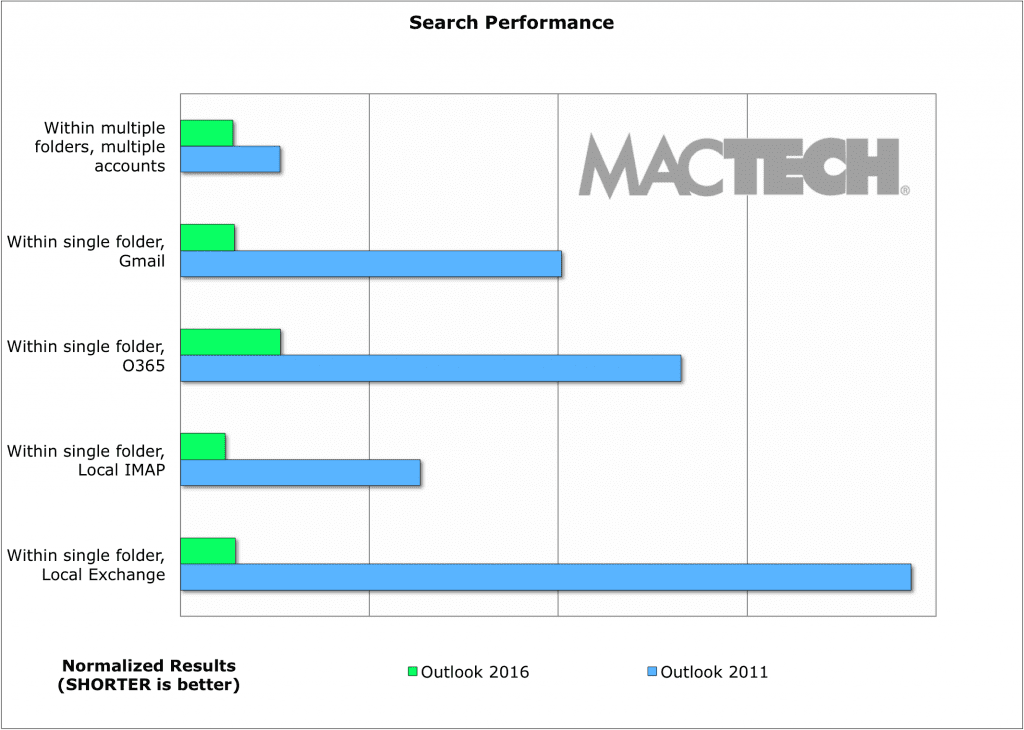

One of the biggest benefits to the new underlying technologies for Outlook 2016 is that it’s using a modern SQLite database. The true power of this is best shown in searches. Here, as expected, Outlook 2016 not only was faster — but was multiples faster across the board on every type of search.

The chart will best visualize how much faster, but in our tests, searching was 2x-15x faster.

Figure 5: Search Performance

(shorter is faster)

[nextpage title=”CPU and Memory Footprint”]

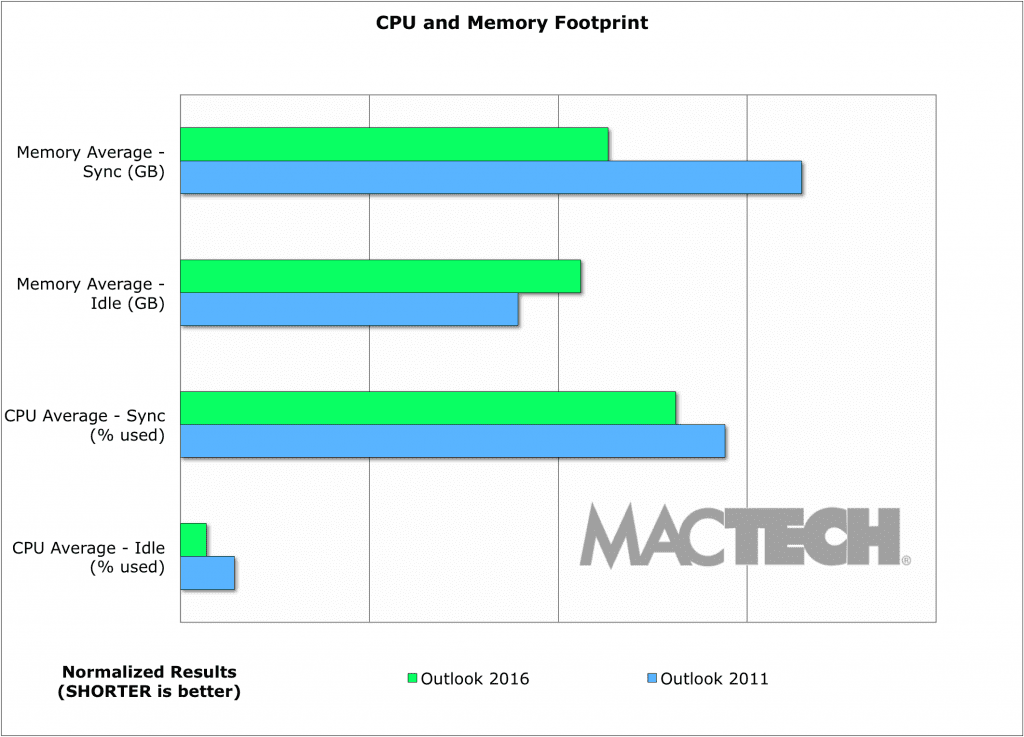

In today’s mobile world, resources matter. CPU and memory use are important to the average user. It translates into how many apps can be open and how quickly you can switch, and it impacts the overall usability of the computer.

We took a look at memory and CPU usage during idle and during a sync process. This wasn’t just for the app, but took into account additional resources that Outlook may be relying on. In nearly every case, Outlook 2016 used fewer resources. How much less? Often 20-40% less.

Figure 6: CPU and Memory Footprint

(shorter is faster)

[nextpage title=”Energy Footprint”]

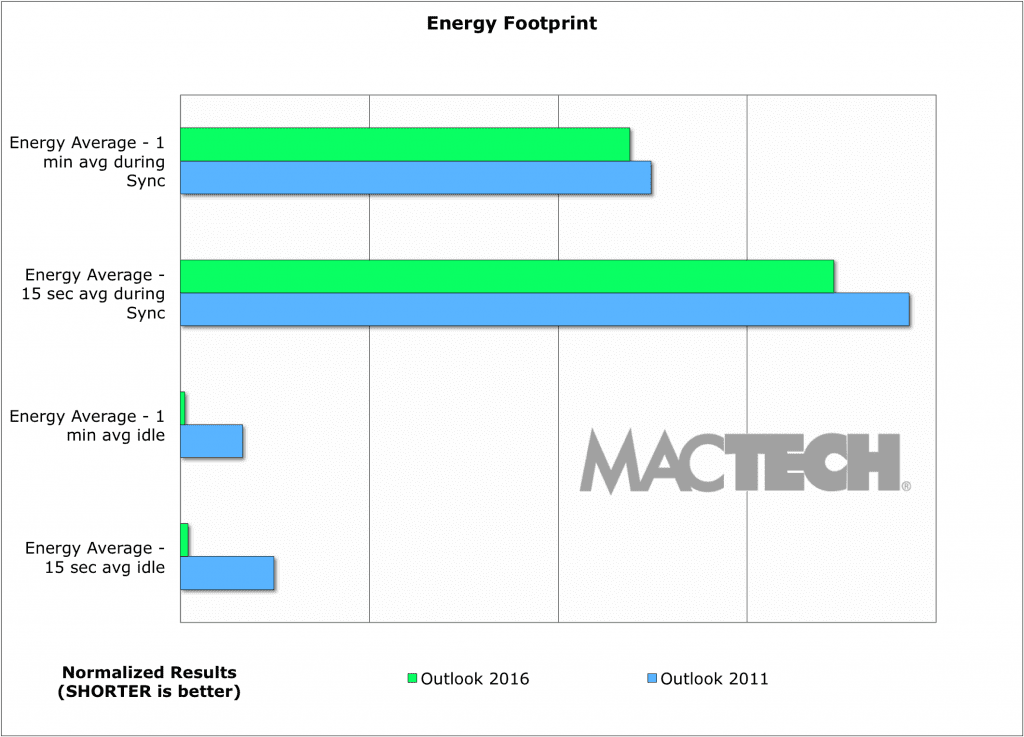

In today’s mobile world, the energy footprint for an application may be the most important. We took a look at a few scenarios for energy usage in Outlook, and then measured them with several hundred samples so that we could get a true feeling for the energy being used.

Most of the time, a mail application is idle. In this case, Outlook 2016 consumes next to no energy, extending the battery life for any user. Sync would be the second most frequent “state,” and here, Outlook 2016 is slightly better as well.

Figure 7: Energy Footprint

(shorter is faster)

[nextpage title=”User Interface Performance”]

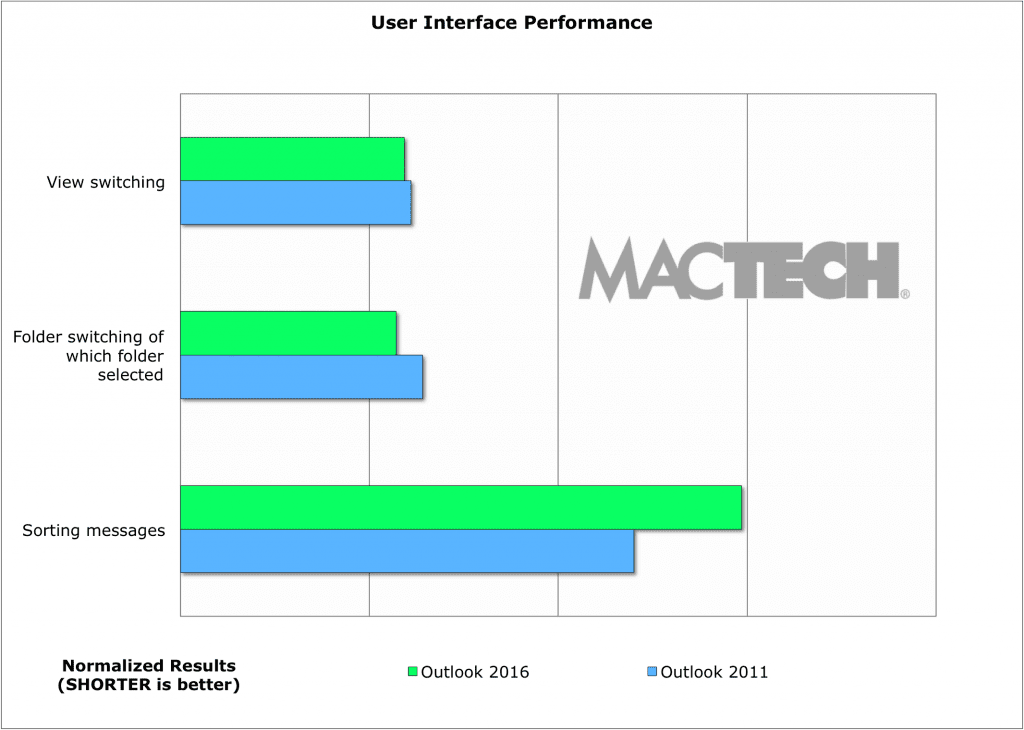

Outlook 2016 has taken the previous interface and cleaned it up alongside the changes to the underpinnings. Much of the user interface feels snappy. In fact, a number of the items we tried to test were so quick that we were unable to catch them with a stopwatch. Even those items that took a bit longer, such as sorting, were slower by only tenths of a second.

Although we were unable to time tasks such as typing and scrolling with a stopwatch, several of them were smoother in Outlook 2016. For example, when you bring up a new message window in Outlook 2011, and start typing, you may find the characters come out in a somewhat choppy way at first. That’s not the case with Outlook 2016; it’s smooth from the start.

Figure 8: User Interface Performance

(shorter is faster)

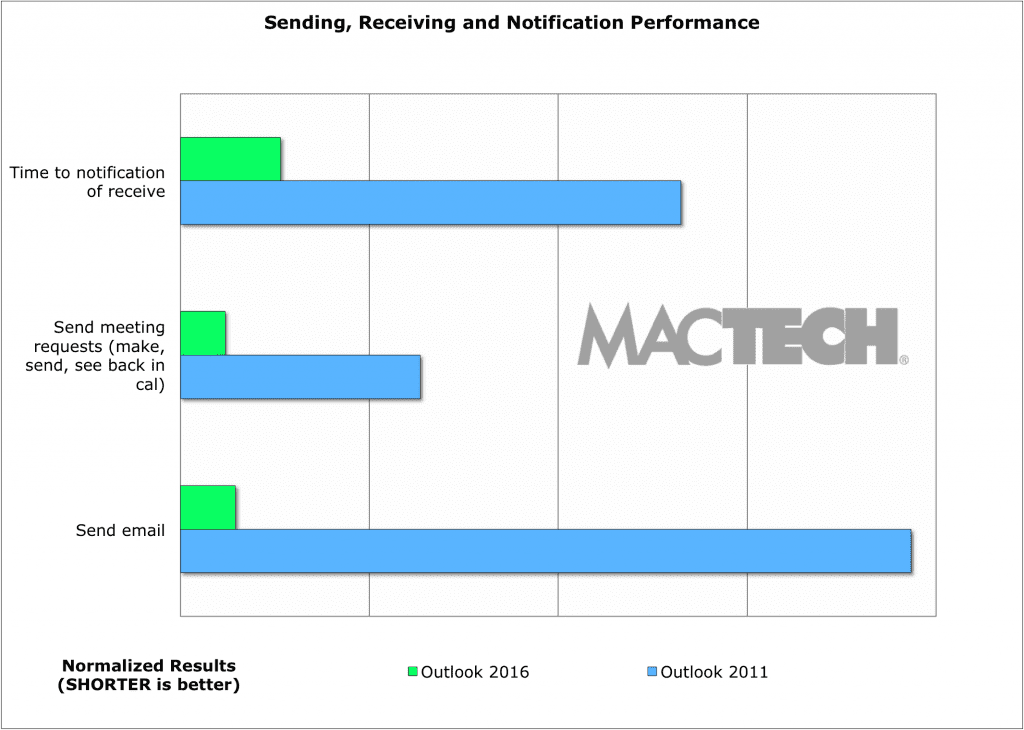

[nextpage title=”Sending, Receiving, and Notification Performance”]

In Outlook 2011, it could take a while to receive a message even if it were on an Exchange account. The reason is that Outlook was polling the server periodically for new messages.

Outlook 2016 does true push from the server, and as a result the time to notification of a received message and the speed of meeting requests making their way through the process are significantly less of an issue. Together, these gave the feeling of a much more up-to-date and snappy interface. Overall, we found Outlook 2016 to be about 5x faster in receiving messages than Outlook 2011.
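To see why polling alone adds delay, here is a minimal sketch in Python. It assumes a hypothetical 60-second polling interval (we did not measure the interval Outlook 2011 actually uses) and ignores network latency, so it illustrates the idea rather than reproducing our measurements.

```python
def polling_delay(arrival: float, interval: float) -> float:
    """Seconds a message waits on the server until the next poll.

    Polls are assumed to happen at t = 0, interval, 2 * interval, ...
    """
    return (-arrival) % interval


def mean_polling_delay(interval: float, samples: int = 1000) -> float:
    """Average wait for messages arriving evenly spread across one interval."""
    arrivals = [(i + 0.5) * interval / samples for i in range(samples)]
    return sum(polling_delay(t, interval) for t in arrivals) / samples


# Hypothetical interval, chosen only for illustration.
INTERVAL_SECONDS = 60.0

print(polling_delay(10.0, INTERVAL_SECONDS))   # message arrives 10 s after a poll
print(mean_polling_delay(INTERVAL_SECONDS))    # about half the interval
```

With push, the server tells the client as soon as the message arrives, so this waiting time largely disappears.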

Also, it isn’t just on the receiving side. Outlook 2016 sends emails a great deal faster as well — 15x faster.

Figure 9: Sending, Receiving, and Notification Performance

(shorter is faster)

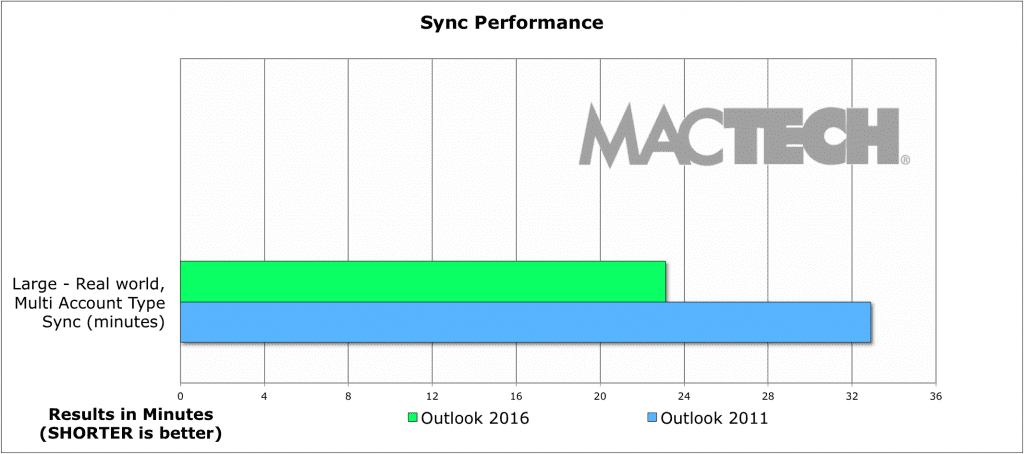

[nextpage title=”Sync Performance”]

For most users, particularly heavier users, sync performance is important in an email application. Outlook 2016 goes about syncing in a different way than Outlook 2011 does. First, 2016 prioritizes what it needs to sync based on what it thinks you, the user, want. For example, if you click on a folder, that becomes the priority to sync. Click on several folders? It will sync them in what it believes is the best priority.

Within a folder, Outlook 2016 uses a three-pass method: grab the headers, grab the message text, and finally, grab the attachments. If you click on a message, that message will become the priority to sync first.
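The ordering described above can be sketched as follows. This is only an illustration of the idea, not Outlook’s actual implementation: the `Message` class, the `sync_plan` function, and the part names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Message:
    msg_id: int
    has_attachment: bool = False


# Hypothetical names for the three passes described in the text.
PASSES = ("headers", "body", "attachments")


def sync_plan(messages, selected_id=None):
    """Return an ordered list of (msg_id, part) fetch steps for one folder."""
    plan = []
    by_id = {m.msg_id: m for m in messages}

    # A message the user clicked on jumps the queue: fetch all of its parts first.
    if selected_id in by_id:
        chosen = by_id[selected_id]
        for part in PASSES:
            if part != "attachments" or chosen.has_attachment:
                plan.append((chosen.msg_id, part))

    # Then three passes over the folder: all headers, all bodies, all attachments.
    already_planned = set(plan)
    for part in PASSES:
        for m in messages:
            if part == "attachments" and not m.has_attachment:
                continue
            step = (m.msg_id, part)
            if step not in already_planned:
                plan.append(step)
    return plan


folder = [Message(1), Message(2, has_attachment=True), Message(3)]
for step in sync_plan(folder, selected_id=2):
    print(step)
```

The point of this ordering is that the message list becomes usable after the first, cheapest pass, while the large attachment downloads wait until the end.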

We wanted to give you an idea of a real world, larger sync. For each of four types of accounts (local Exchange, local IMAP, Office 365 Exchange, and Gmail), we had a set of folders: one with 5000 messages, and three more folders that had about 600 messages each. The latter three folders had, respectively, no attachments, all messages with attachments, and a mix of messages with and without attachments. In all, there were about 7000 messages for each of the four types of accounts, or almost 30,000 messages in this large, multi-account, real world sync.

Outlook 2016 was not just faster, but it synced all the messages, and got the job done in one-third less time.

Figure 10: Sync Performance — Real World Test

(shorter is faster)

We also wanted to try tests that would reflect a solid amount of mail, for example, what a high volume user who hasn’t checked email for much of the day would see. To do this we created three folder sets of about 500 messages each. One had no attachments. One had a mix of messages with and without attachments. The third had messages that all had attachments.

For the initial sync, the folder went from empty to filled with these messages. We timed two things: when the messages were user interface responsive, and the total sync time. User interface responsive means that we could see the message in the list, click on it and work with it.

For the incremental tests, we added about 200 messages to the folder that had a mix of messages with and without attachments. The incremental messages were also a mix.

The following are what we saw for each of the four types of accounts: Local Exchange, Local IMAP, Office 365 Exchange, and Gmail. All had the same tests run as described above. All the charts have the same normalization scale, so that you can compare one type of email account to another.

One thing to note about the difference in the progress bars for Outlook 2016 and 2011: 2011 often downloads in batches of 20 messages. It does this to be “kind” to the server it’s talking to. You see 20, then another 20, then another 20, and so on until it’s caught up. Outlook 2016 also downloads in batches of 20, but the progress bar correctly shows the entire amount of messages to sync, not just the status within the current set of 20.
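The difference between the two progress bars can be sketched like this. The function name is our own, and the code only models what the two bars display; it is not taken from either application.

```python
BATCH_SIZE = 20  # batch size mentioned in the text


def progress_views(synced: int, total: int, batch_size: int = BATCH_SIZE):
    """Return (per_batch, overall) progress as fractions between 0 and 1.

    per_batch models a bar that restarts with every batch of messages;
    overall models a bar that tracks the whole sync.
    """
    if synced >= total:
        return 1.0, 1.0
    batch_start = (synced // batch_size) * batch_size
    batch_end = min(batch_start + batch_size, total)
    per_batch = (synced - batch_start) / (batch_end - batch_start)
    overall = synced / total
    return per_batch, overall


# 30 of 50 messages synced: halfway through the second batch, 60% done overall.
print(progress_views(30, 50))
```

A per-batch bar fills and resets over and over, so it tells you little about how much of the whole sync is left.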

[nextpage title=”Exchange vs. IMAP”]

As you read on, you’ll notice that IMAP is frequently faster at syncing than Exchange accounts. This is not actually a fair comparison. Why? IMAP is actually much lighter weight. Exchange does a lot more than IMAP. There’s XML metadata with Exchange syncs that is not present with IMAP, so the transactions are larger on Exchange syncs.

Of course, you get benefits from using Exchange accounts that IMAP simply does not support, including online archives, delegation, syncing categories, client support for updating rules, free/busy support, and coming soon, propose new time support for scheduling. You also get streaming notifications, which means much faster notification.

In addition, you get a far more reliable sync, one that rarely sees errors or has issues. You just don’t see Exchange accounts run into the kinds of problems that arise when IMAP so easily gets confused.

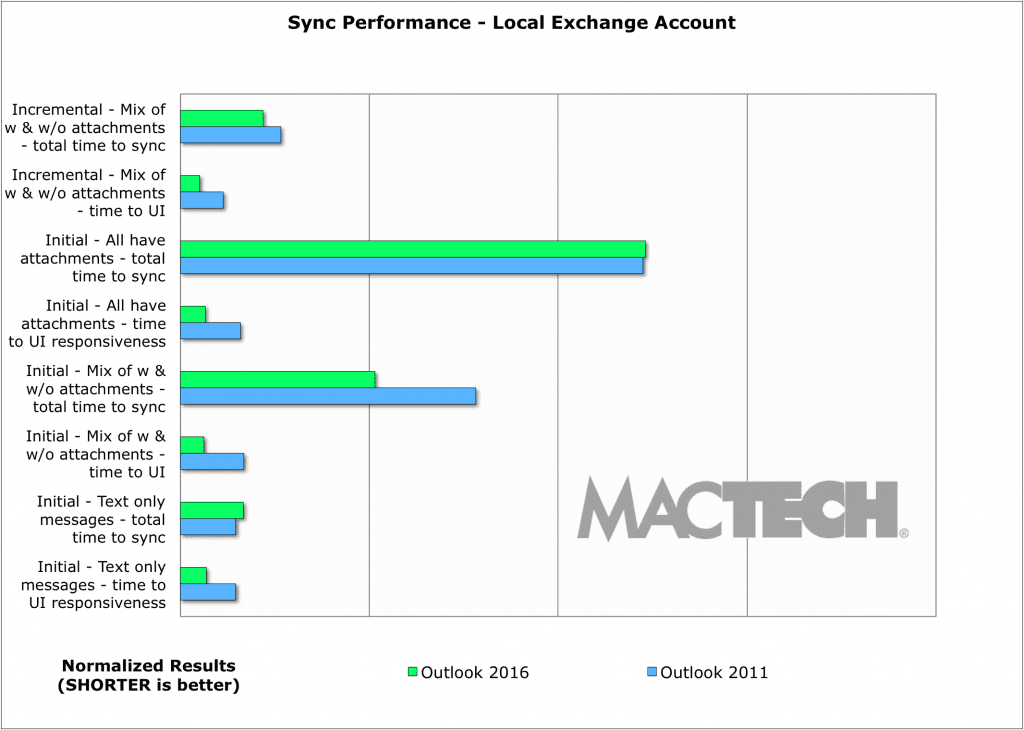

[nextpage title=”Local Exchange Sync Performance”]

Sync for an Exchange account that’s on a local server (i.e., an Exchange server on the same network as the client) works well in Outlook 2016. In a couple of tests, 2011 and 2016 are on par, but for the most part, there’s a solid speed improvement in Outlook 2016 when compared to Outlook 2011.

Exchange performance was reliable, consistent, and we never saw any issues with messages partially loaded, or missing.

Figure 11: Sync Performance — Local Exchange Account

(shorter is faster)

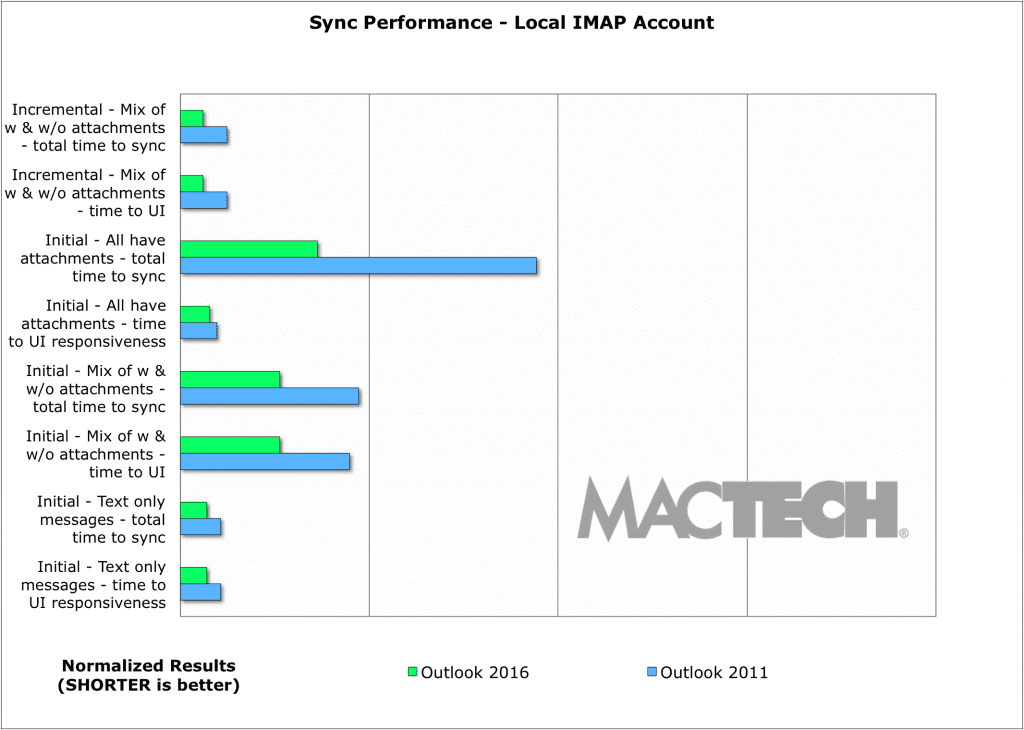

[nextpage title=”Local IMAP Sync Performance”]

Sync for an IMAP account that’s on a local server (i.e., an IMAP server on the same network as the client) works well in Outlook 2016. Outlook 2016 was faster across the board for local IMAP. Especially for messages that had attachments, we saw Outlook 2016 run 2x-2.5x faster.

Local IMAP accounts worked well. While we would sometimes see syncing issues on both Outlook 2011 and 2016, they would resolve typically on the next sync. The tests were fairly consistent as well.

Figure 12: Sync Performance — Local IMAP Account

(shorter is faster)

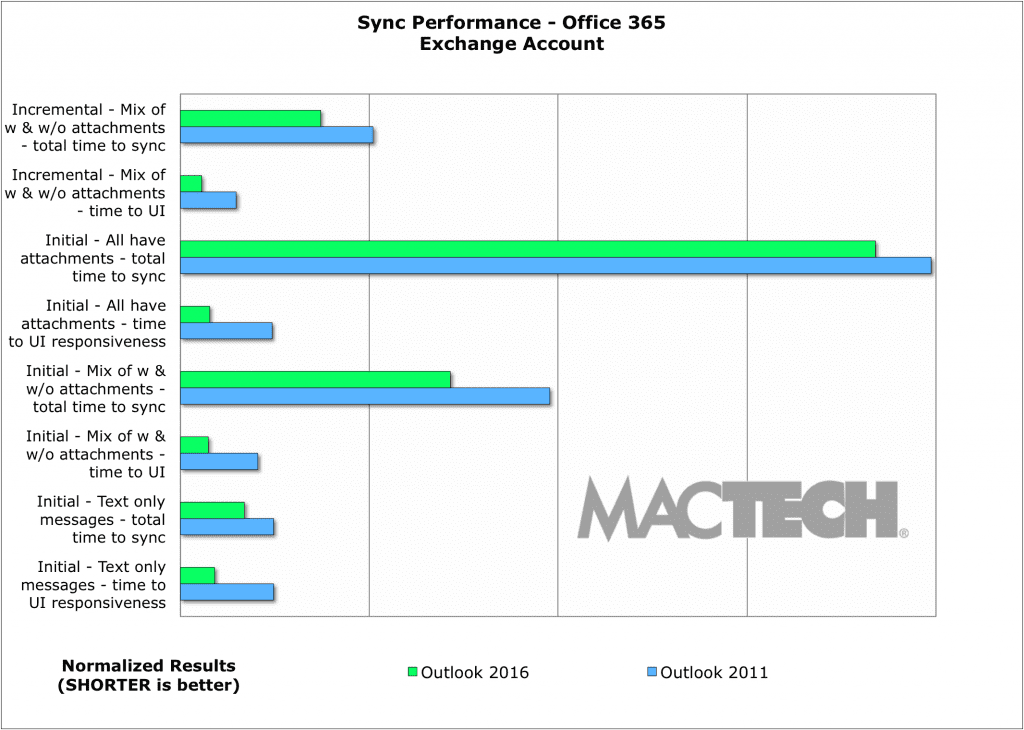

[nextpage title=”Office 365 Sync Performance”]

As a hosted service, Office 365 can be a challenge to test. With Office 365 Exchange accounts, it was one of the most rock solid hosted account experiences we’ve seen. We didn’t see any sync issues. Across the tests we saw Outlook 2016 sync noticeably faster than Outlook 2011 when accessing Office 365 accounts.

Figure 13: Sync Performance — Office 365 Account

(shorter is faster)

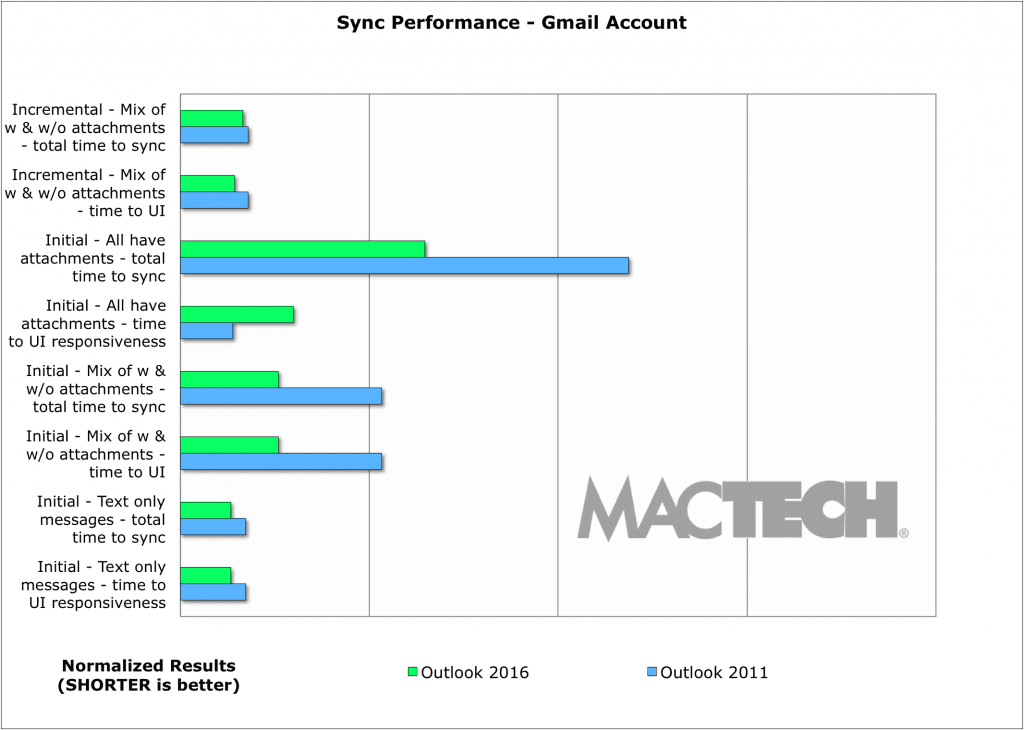

[nextpage title=”Gmail Sync Performance”]

With so many people on Gmail, we wanted to see how the new Outlook ran with it. For the most part, we saw significant speed improvements — 2x or more — for Outlook 2016 over Outlook 2011.

We should pause here to note that testing and working with Gmail was more than a little challenging. Google regularly throttles performance, and does so at usage levels low enough to affect power email users on any external client. This is why migrating to Gmail with a lot of folders is so hard and time consuming. The following tests are the best-case scenario we found with Gmail, when (we believe) Google was not throttling our accounts.

Furthermore, Gmail’s implementation of IMAP is non-standard. And, regardless of email client, you may find that Gmail won’t always show you the correct counts for mail messages. This is not the fault of the email client, but Gmail’s implementation of IMAP.

When you have so many different examples running side by side, you see things from a different perspective, sometimes quite clearly. While it was not part of our goals in this article, and it’s not benchmarking at all, we can tell you that hands down, the experience with Office 365 hosted Exchange accounts was far more reliable, consistent, and predictable than the experience with Gmail, with its non-standard IMAP, performance throttling, and sync inconsistencies. We don’t believe these issues appear in Gmail’s web interface, but they do apply to any email application sending and receiving through a Gmail account.

Figure 14: Sync Performance — Gmail Account

(shorter is faster)

[nextpage title=”What about Mail.app?”]

There may be the natural inclination to compare an application like Apple’s Mail.app to Outlook. The problem is that this is like comparing apples to oranges. Mail doesn’t have quality searching. Mail is for mail only, and doesn’t integrate calendar and contacts — those are separate apps. Mail has issues with confusing threads, application instabilities, crashes, and more.

Apple Mail also lacks a whole series of features inherent to the Exchange protocol that Outlook supports, including online archives, delegation, syncing categories, client support for updating rules, and free/busy support. Mail also doesn’t support things like “streaming notifications,” where the server tells the client that there’s new mail and you can even see new mail while you are syncing. In Mail.app, you have to wait for the next check of the folder. Not supporting these features affects performance: Apple Mail is a lighter-weight application in many ways. That said, many if not most Apple Mail users report “crazy” (i.e., very high) levels of resource usage.

Mail doesn’t consistently download mail either — often skipping a full download of a message until it gets to it later. Unfortunately, you don’t get to control when “later” is. Worse yet, Apple Mail is not kind to mail servers (see below). That’s why when you see a mail server straining, Mail just gives you an error that it couldn’t sync or move messages.

When it comes to benchmarking, sure, you could test things like launch speed and more. However, it’s more difficult to compare applications that operate differently, especially when Apple Mail is such an inconsistent performer, and often isn’t providing the same services.

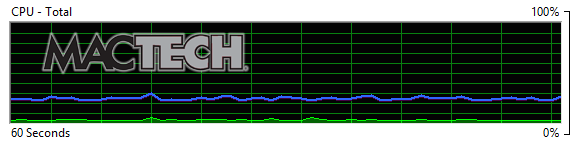

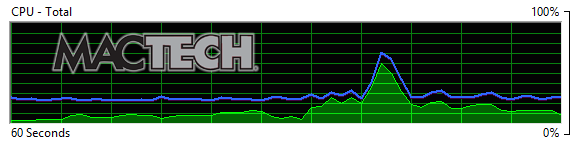

[nextpage title=”From the Server Perspective”]

Given that Microsoft makes Outlook, one would expect that Outlook works well with Microsoft Exchange servers. It was beyond the scope of this project to benchmark servers, but we wanted to see how different mail applications behaved from a “server” perspective. This is of particular interest to Enterprise and IT system administrators, given that they need to look at performance for the entire organization as well as the servers.

Below are four samples: screen shots of the CPU usage graphs on our on-premise Exchange 2013 test server.

The first screen shot (figure 15) shows a baseline of the server: what it looks like when no clients are syncing mail. It’s the “idle” state, if you will. The green line represents the CPU usage. The blue line is the “maximum frequency,” which is essentially how fast the CPU is running (faster also means greater electricity usage).

The second, third and fourth samples show Mail.app, Outlook 2011, and Outlook 2016 each syncing an Exchange account, respectively.

As you can see, Mail.app (figure 16) does not consider it important to “be kind” to the Exchange server. It’s pretty clear here that Apple is not respecting the guidelines for mail clients talking to an Exchange server. They are breaking the rules (at least in how Microsoft Exchange is expecting a client to behave). Note the spike to 60% CPU usage — something we saw often.

Outlook 2011 does fairly well and is relatively even handed (figure 17), frequently hovering around 10% CPU usage.

Clearly, Outlook 2016 is the most respectful, and “kindest” to the Exchange server (figure 18). Outlook 2016 was so good to the Exchange server, that it often used only slightly more than the equivalent of an “idle” server.

Figure 15: Exchange Server CPU Usage – Baseline

(Green is CPU Usage, Blue is Maximum Frequency)

Figure 16: Exchange Server CPU Usage – Mail.app

(Green is CPU Usage, Blue is Maximum Frequency)

Figure 17: Exchange Server CPU Usage – Outlook 2011

(Green is CPU Usage, Blue is Maximum Frequency)

Figure 18: Exchange Server CPU Usage – Outlook 2016

(Green is CPU Usage, Blue is Maximum Frequency)

[nextpage title=”Conclusion”]

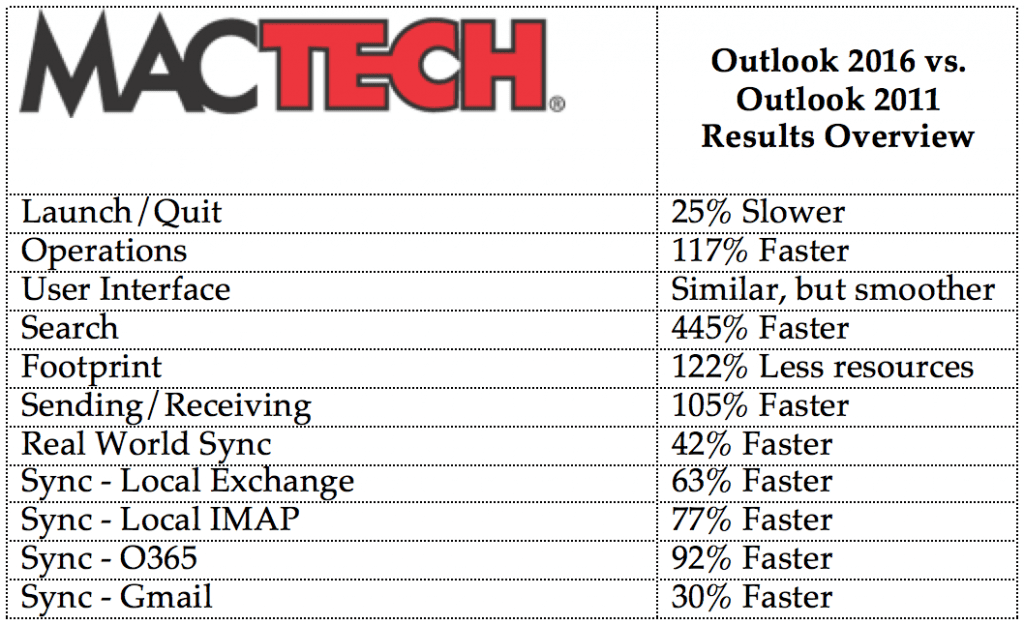

It’s difficult to make broad statements on how much faster an application is overall, but it’s what you likely want to know. To satisfy that, MacTech has taken a series of test results, and used a center weighted average to give you an idea of how much faster these applications are. See Table 1.

Table 1: Outlook 2016 vs. Outlook 2011

Explanation of “Faster”: “Faster” means speed and is meant to give users an indication of perceived speed. 50% faster means that it finished the task in 2/3 the time. 100% faster means that it was twice as fast, or that it finished in half the time.
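The arithmetic behind “faster” can be written out in a few lines of Python. The function names are ours, and the 30-second and 20-second timings are made-up values used only to show the conversion.

```python
def time_fraction(pct_faster: float) -> float:
    """Fraction of the original time needed by something `pct_faster` percent faster."""
    speed_ratio = 1 + pct_faster / 100.0  # 50% faster means 1.5x the speed
    return 1 / speed_ratio


def percent_faster(old_seconds: float, new_seconds: float) -> float:
    """How much faster (in percent) the new time is compared to the old time."""
    return (old_seconds / new_seconds - 1) * 100.0


print(round(time_fraction(50), 3))   # 0.667, i.e. 2/3 of the time
print(time_fraction(100))            # 0.5, i.e. half the time
print(percent_faster(30.0, 20.0))    # 50.0 (made-up timings)
```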

Note: For many determinations, MacTech uses geometric means rather than averages to give a more center weighted, and therefore conservative, evaluation of aggregate speeds.
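To illustrate why a geometric mean is the more conservative choice, here is a small sketch using Python’s standard `statistics` module. The speedup ratios are made-up values, not our test results.

```python
from statistics import geometric_mean, mean

# Made-up speedup ratios (old time / new time), for illustration only.
# One outlier (15x) sits alongside several modest gains.
speedups = [1.2, 1.5, 2.0, 15.0]

arith = mean(speedups)
geo = geometric_mean(speedups)

print(f"arithmetic mean: {arith:.2f}x")
print(f"geometric mean:  {geo:.2f}x")
```

A single very large ratio pulls the arithmetic mean up sharply, while the geometric mean stays closer to the typical result.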

Said another way, in almost 75% of the tests run, Outlook 2016 was faster. In over half the tests, Outlook 2016 was much faster. In fact, in more than 1/3 of the tests, Outlook 2016 was double the speed or more.
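A tally like this can be produced by classifying each test by the ratio of its two timings. The sketch below uses hypothetical test names and made-up timings, and the bucket names are ours. The only threshold taken from the text is “double the speed or more.”

```python
from collections import Counter


def classify(t2011: float, t2016: float) -> str:
    """Bucket one test by how Outlook 2016's time compares to Outlook 2011's."""
    ratio = t2011 / t2016  # above 1 means 2016 finished sooner
    if ratio >= 2.0:
        return "2016 double or more"
    if ratio > 1.0:
        return "2016 faster"
    if ratio < 1.0:
        return "2011 faster"
    return "tie"


# Made-up timings in seconds: (Outlook 2011, Outlook 2016).
timings = {
    "launch": (3.0, 3.6),
    "open folder": (0.8, 0.5),
    "search": (6.0, 1.0),
    "sync": (90.0, 60.0),
}

tally = Counter(classify(old, new) for old, new in timings.values())
for bucket, count in tally.items():
    print(f"{bucket}: {count}")
```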

Clearly, when it comes to syncing, there’s a big difference between messages with attachments and not. Outlook 2016’s three-pass method is more and more impressive as you see more messages with attachments.

No doubt about it. Performing faster in a majority of the tests we ran, Outlook 2016 is noticeably and significantly faster in a number of key areas compared to Outlook 2011. Add to this a far more stable and capable SQLite engine under the hood, and it’s an upgrade that any Office user should seriously consider.

About the author…

Neil is the Editor-in-Chief and Publisher of MacTech Magazine. Neil has been in the Mac industry since 1985, has developed software, written documentation and has been heading up the magazine since 1992. When Neil writes a review, he likes to put solutions into a real-life scenario and then write about that experience from the user point of view. That said, Neil has a reputation around the office for pushing software to its limits and crashing software/finding bugs. Drop him a line at publisher@mactech.com